The Future of Automated Testing: Code vs Language AI

E2E testing is shifting to natural-language AI agents while integration tests still need deterministic code. Where does each layer of testing belong — and do we actually agree on what 'right' looks like?

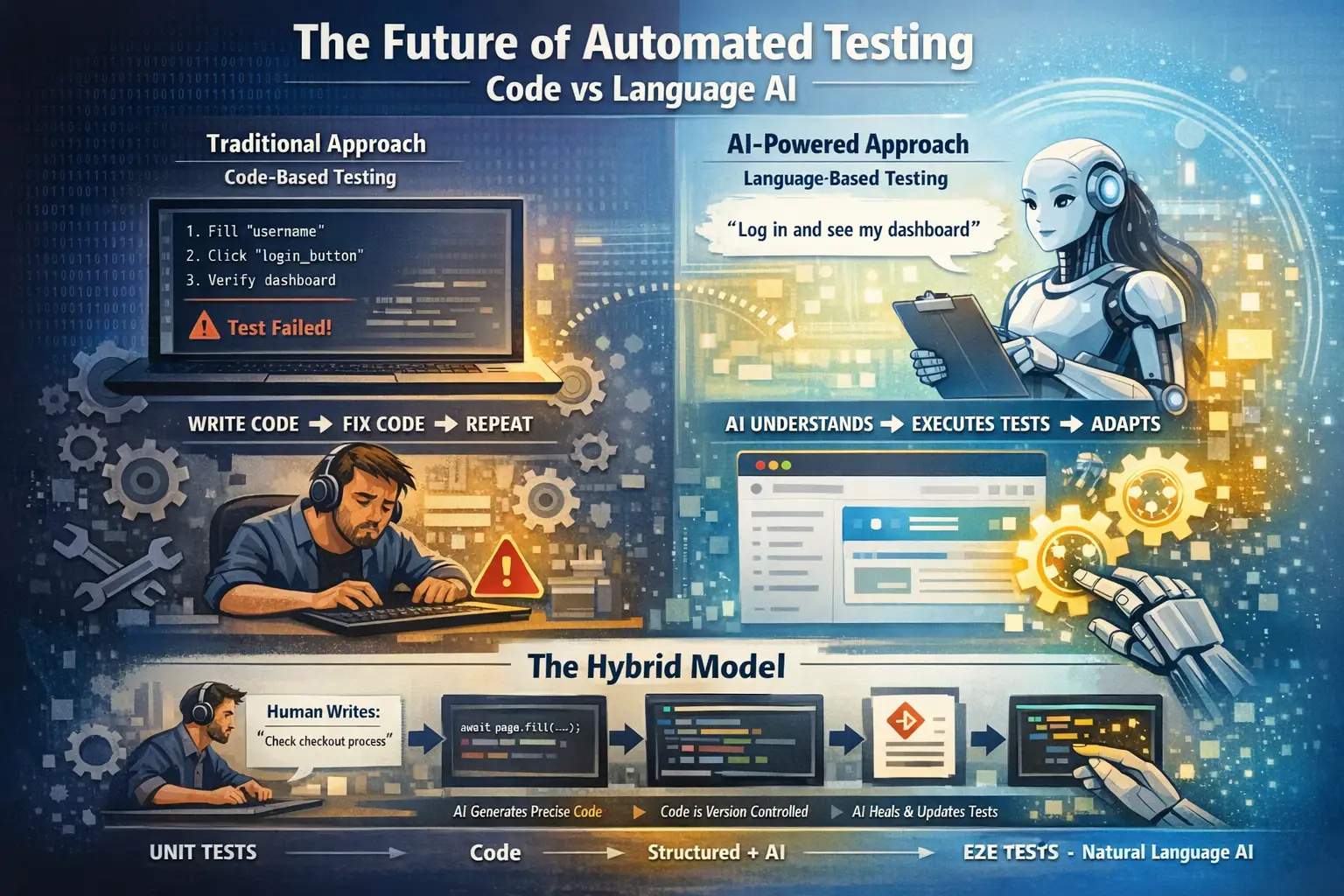

For decades, automated testing meant writing code. Developers wrote test scripts, maintained them, and fixed them when they broke. It worked — but it was expensive, slow, and required specialist knowledge. AI is now challenging every assumption behind that model, and the honest answer to "which layer should use code, and which should use natural language?" is not yet settled.

E2E Testing — Language AI Is Winning

End-to-end (E2E) tests simulate real users. Users do not think in code. They think in outcomes:

"I want to log in and see my dashboard."

Language AI aligns perfectly with this:

Traditional approach:

Human intent → translate to code → code breaks → fix code → repeat

AI approach:

Human intent → AI executes intent directly → UI changes → AI adapts

The biggest problem with traditional E2E tests was always maintenance. UIs change constantly, and every change broke tests. Teams spent more time fixing tests than writing features. Language AI helps here because it focuses on intent, not implementation: if a button moves, the agent still finds it; if an element ID changes, the agent does not care.
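To make "intent, not implementation" concrete, here is a toy sketch. It does not drive a browser or call any AI; the `Element` type and both lookup functions are hypothetical. It only shows why a lookup keyed on what the user sees (a button labelled "Log in") survives a redesign that breaks a lookup keyed on an element ID. Playwright's role-based locators, such as `page.get_by_role("button", name="Log in")`, apply a similar idea inside ordinary test code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Element:
    """Toy stand-in for a rendered UI element (hypothetical, not a real DOM node)."""
    element_id: str
    role: str
    label: str  # the text a user actually sees


def find_by_id(ui: list[Element], element_id: str) -> Optional[Element]:
    # Implementation-coupled lookup: fails as soon as the ID is renamed.
    return next((e for e in ui if e.element_id == element_id), None)


def find_by_intent(ui: list[Element], role: str, label: str) -> Optional[Element]:
    # Intent-style lookup: "the button that says Log in", wherever it sits
    # on the page and whatever its ID is (case-insensitive label match).
    return next(
        (e for e in ui if e.role == role and e.label.lower() == label.lower()),
        None,
    )


# Version 1 of the login page.
ui_v1 = [
    Element("login-btn", "button", "Log in"),
    Element("email-input", "textbox", "Email"),
]

# Version 2: the button moved below the email field and its ID was renamed.
ui_v2 = [
    Element("email-input", "textbox", "Email"),
    Element("btn-auth-submit", "button", "Log in"),
]

for version, ui in [("v1", ui_v1), ("v2", ui_v2)]:
    found_by_id = find_by_id(ui, "login-btn") is not None
    found_by_intent = find_by_intent(ui, "button", "log in") is not None
    print(f"{version}: by id={found_by_id}, by intent={found_by_intent}")
```

On the second version the ID lookup finds nothing while the intent lookup still finds the button. A real agent layers far more on top (visual context, fuzzy matching, retries), which is also where its non-determinism comes from.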

Tools like Playwright MCP, QA.tech, and Checksum.ai are already proving this in production. The direction is clear — E2E tests are moving toward natural-language scenarios executed by AI agents.

The remaining concern is non-determinism: an AI agent may interpret a scenario slightly differently on each run, which is a real problem for critical paths where exact behaviour matters. The emerging solution is a hybrid: AI generates Playwright code from the natural-language scenario, and that code is version-controlled and executed deterministically. That gives the best of both worlds: human-readable intent and deterministic execution.
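One way to put a number on this non-determinism is to run the same scenario several times and check whether every run reaches the same verdict; this is also the "running multiple times" mitigation listed in the three-layer model. The sketch below is a simulation under stated assumptions: `make_flaky_agent` is a hypothetical, scripted stand-in for an AI agent that misreads every Nth run, and `pinned_code_scenario` stands in for generated code that has been pinned in version control. No real agent or browser is involved.

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def make_flaky_agent(misread_every: int) -> Callable[[], bool]:
    """Hypothetical stand-in for an AI agent running a natural-language scenario.

    The app is assumed correct, but every `misread_every`-th run the agent
    'interprets' the scenario differently and reports a failure.
    Scripted rather than random so the demo is reproducible.
    """
    verdicts = cycle([True] * (misread_every - 1) + [False])
    return lambda: next(verdicts)


def pinned_code_scenario() -> bool:
    # Stand-in for generated, version-controlled test code:
    # same steps, same verdict, every run.
    return True


def run_repeatedly(scenario: Callable[[], bool], runs: int) -> Counter:
    """Run one scenario `runs` times and tally the verdicts."""
    return Counter("pass" if scenario() else "fail" for _ in range(runs))


def is_consistent(tally: Counter) -> bool:
    # Consistent means every run reached the same verdict.
    return len(tally) == 1


agent_tally = run_repeatedly(make_flaky_agent(misread_every=10), runs=50)
code_tally = run_repeatedly(pinned_code_scenario, runs=50)
print("agent:", dict(agent_tally), "consistent:", is_consistent(agent_tally))
print("pinned code:", dict(code_tally), "consistent:", is_consistent(code_tally))
```

Repeated runs only expose inconsistency; they do not remove it. Pinning the generated code is what makes the verdict repeatable.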

Integration Testing — Code Still Holds (For Now)

Integration tests are fundamentally different. They verify technical contracts:

- Does this endpoint return status 201?

- Does this database row have the correct value?

- Does this queue receive the correct message?

These are precise by definition. There is no room for interpretation. A wrong status code is a wrong status code regardless of intent.
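Each of these questions maps to one exact assertion. Here is a minimal sketch using only Python's standard library, assuming an in-memory `sqlite3` database as a stand-in for the real database and a `queue.Queue` as a stand-in for a message broker; `create_user` is a hypothetical handler, not a real service.

```python
import json
import queue
import sqlite3


def create_user(db: sqlite3.Connection, events: queue.Queue, email: str) -> tuple[int, dict]:
    """Hypothetical handler under test: inserts a row, publishes an event, returns (status, body)."""
    cur = db.execute("INSERT INTO users (email) VALUES (?)", (email,))
    db.commit()
    user_id = cur.lastrowid
    events.put(json.dumps({"type": "user.created", "id": user_id}))
    return 201, {"id": user_id, "email": email}


def test_create_user_contract() -> None:
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)")
    events: queue.Queue = queue.Queue()

    status, body = create_user(db, events, "test@example.com")

    # 1. Exact status code: 201, not "some 2xx".
    assert status == 201, f"expected 201, got {status}"

    # 2. Exact database state: one row, with exactly this value.
    rows = db.execute("SELECT id, email FROM users").fetchall()
    assert rows == [(body["id"], "test@example.com")], rows

    # 3. Exact message on the queue (get_nowait raises queue.Empty if nothing arrived).
    message = json.loads(events.get_nowait())
    assert message == {"type": "user.created", "id": body["id"]}, message


test_create_user_contract()
print("contract holds")
```

There is no interpretation step anywhere: each check either matches exactly or fails with the actual value in the message.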

Natural language loses precision exactly where integration tests need it most:

"User should be created successfully"

↓

Which table? Which columns? Which values?

Status 200 or 201? Response body shape?

Structured formats like YAML sit in the middle, readable but precise:

    endpoint: POST /api/users
    expect:
      status: 201
      body:
        email: test@example.com

But even YAML still needs a code runner underneath. The abstraction adds readability without removing the need for precision.
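That runner does not have to be large. The sketch below assumes the YAML spec has already been parsed into a dictionary (for example with PyYAML's `yaml.safe_load`; it is written inline here so the code has no dependencies) and that the HTTP request has already been sent, so it only compares a status code and response body against the spec. `check` and `diff_subset` are hypothetical names, and treating the expected body as a subset of the actual one is our design assumption.

```python
from typing import Any

# The YAML spec as it would look after parsing (kept as a dict so this runs without PyYAML).
SPEC = {
    "endpoint": "POST /api/users",
    "expect": {
        "status": 201,
        "body": {"email": "test@example.com"},
    },
}


def diff_subset(expected: Any, actual: Any, path: str = "body") -> list[str]:
    """Return mismatches where `actual` does not contain `expected`.

    Dicts are matched as subsets (extra keys in the response are allowed);
    every other value must be exactly equal.
    """
    if isinstance(expected, dict):
        if not isinstance(actual, dict):
            return [f"{path}: expected an object, got {actual!r}"]
        problems: list[str] = []
        for key, want in expected.items():
            if key not in actual:
                problems.append(f"{path}.{key}: missing")
            else:
                problems.extend(diff_subset(want, actual[key], f"{path}.{key}"))
        return problems
    return [] if expected == actual else [f"{path}: expected {expected!r}, got {actual!r}"]


def check(spec: dict, status: int, body: dict) -> list[str]:
    """Compare one HTTP response against a spec; an empty list means it passed."""
    expect = spec["expect"]
    problems = []
    if status != expect["status"]:
        problems.append(f"status: expected {expect['status']}, got {status}")
    problems.extend(diff_subset(expect.get("body", {}), body))
    return problems


# A response that honours the contract, and one that returns 200 with the wrong email.
print(check(SPEC, 201, {"id": 1, "email": "test@example.com"}))
print(check(SPEC, 200, {"id": 1, "email": "other@example.com"}))
```

Allowing extra keys keeps the spec readable (there is no need to list generated IDs), while every key that is listed is compared exactly.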

The honest assessment — integration tests will stay closer to code or structured formats for the foreseeable future. The determinism requirement is too important to sacrifice.

The Real Future — A Three-Layer Model

The industry is converging on a clear separation of concerns across the testing pyramid:

Layer 1: Unit Tests (always code)

- Fast, isolated, deterministic
- Developers write them alongside feature code
- No AI execution needed

Layer 2: Integration Tests (structured format: YAML or code)

- Precise contracts
- AI generates, humans verify
- Deterministic execution

Layer 3: E2E Tests (natural-language scenarios)

- Intent-driven, self-healing
- AI executes via browser agents
- Non-determinism managed by running scenarios multiple times

The Hybrid Model Emerging

The most promising direction is not choosing between code and language — it is using language as the input and code as the output:

Human writes plain-English scenario

↓

AI generates precise test code

↓

Code is version controlled

↓

AI heals code when application changes

↓

Human reviews healed tests periodically

Humans own the what. AI owns the how and the maintenance. This removes the biggest cost of traditional testing, the maintenance burden, while keeping the reliability of code execution.
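This flow can be sketched as a small registry of pinned tests. Everything here is hypothetical: `PinnedSuite` stands in for test files committed to version control, the `generate` callable stands in for whatever AI model writes the code, and hashing the scenario text to decide when to regenerate is our assumption, not a feature of any particular tool. Anything the AI writes or heals stays flagged until a human approves it.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable


@dataclass
class PinnedTest:
    scenario: str              # plain-English spec that humans own
    scenario_hash: str         # version of the spec the code was generated from
    code: str                  # generated test code, as committed to version control
    needs_review: bool = True  # anything the AI wrote starts unreviewed


def hash_scenario(text: str) -> str:
    # Leading/trailing whitespace is ignored so reformatting alone does not trigger regeneration.
    return hashlib.sha256(text.strip().encode("utf-8")).hexdigest()


class PinnedSuite:
    """Hypothetical stand-in for a directory of committed, generated tests."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self.generate = generate  # hypothetical AI code generator
        self.tests: dict[str, PinnedTest] = {}

    def sync(self, name: str, scenario: str) -> PinnedTest:
        """Generate code only when the scenario is new or its wording has changed."""
        digest = hash_scenario(scenario)
        current = self.tests.get(name)
        if current is None or current.scenario_hash != digest:
            current = PinnedTest(scenario, digest, self.generate(scenario))
            self.tests[name] = current
        return current

    def heal(self, name: str, new_code: str) -> None:
        """Record an AI repair after an application change and flag it for review."""
        test = self.tests[name]
        test.code = new_code
        test.needs_review = True

    def approve(self, name: str) -> None:
        """A human has reviewed the current code."""
        self.tests[name].needs_review = False

    def pending_review(self) -> list[str]:
        return [name for name, test in self.tests.items() if test.needs_review]


generated: list[str] = []


def fake_generate(scenario: str) -> str:
    # Stand-in for an AI call; records every generation so we can count them.
    generated.append(scenario)
    return f"# test code generated from: {scenario}"


suite = PinnedSuite(fake_generate)
suite.sync("login", "Log in and see the dashboard")
suite.approve("login")
suite.sync("login", "Log in and see the dashboard")  # unchanged spec: no new generation
suite.heal("login", "# healed: updated login button locator")
print(len(generated), suite.pending_review())
```

Because code is regenerated only when the scenario text changes, an unchanged scenario always executes the same committed code; the AI's freedom is confined to the generate and heal steps, both of which end in human review.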

Insight From Real-World Experience

Metropolis, which built a multi-agent E2E test generation system, found that generic AI test agents underperform. Agents with deep codebase context and project-specific knowledge produced dramatically better tests. The future is not a generic AI test tool — it is an AI testing agent that deeply understands your specific application, standards, and patterns.

Bottom Line

| | Now | Future |
|---|---|---|
| Unit tests | Code | Code |
| Integration tests | Code | Structured + AI-generated |
| E2E tests | Code (brittle) | Natural language + AI-executed |
| Test maintenance | Human | AI self-healing |
| Test authoring | Developer only | Anyone |
| Execution | Deterministic | Deterministic (AI generates code) |

The future of testing is not AI replacing test code. It is AI removing the need for humans to write and maintain test code. Humans define intent. AI handles everything else.

Frequently Asked Questions

Will AI replace Playwright and Cypress?

No — at least not at the execution layer. AI agents are most useful authoring and healing tests; the runtime that actually clicks buttons is still most reliable when it is deterministic code. Playwright in particular is becoming the execution target for AI-generated E2E tests, not their replacement.

Are natural-language E2E tests safe for critical paths?

Not on their own. For anything money-moving or compliance-sensitive, generate the Playwright code from the natural-language scenario, review it, and pin it in version control. Keep the natural language as the spec; the code is the contract.

Should integration tests be written in natural language?

Generally no. Integration tests verify exact contracts (status codes, schemas, DB state) where interpretation is a bug. Structured formats like YAML with AI-assisted authoring give you readability without sacrificing precision.

Who owns a production regression when AI wrote and healed the test?

The team shipping the feature still owns the regression. AI authorship does not transfer accountability. Treat AI-generated tests the way you treat a dependency — you are responsible for what you adopt.

What makes an AI testing agent actually good?

Deep project context. Generic AI testing tools underperform; agents that know your codebase, conventions, and domain-specific edge cases produce tests engineers actually trust.

Do You Agree?

This is a debate worth having in the open. Some engineers believe E2E will always need code. Others think unit tests are next on the AI chopping block. Reasonable people disagree.

The questions we keep asking ourselves:

- Is non-determinism in E2E tests acceptable if it catches more real-world bugs?

- Should AI-generated test code be reviewed line by line, or treated like a dependency?

- If AI writes and heals tests, who owns the regression when it fails in production?

- Does the three-layer model hold — or is integration testing the next layer to fall to natural language?

We'd love to hear where you stand. Drop us a line — we're building in this space, and every honest counter-argument sharpens the answer.
