Will AI Agents Replace CI/CD Pipelines — Or Work Alongside Them?

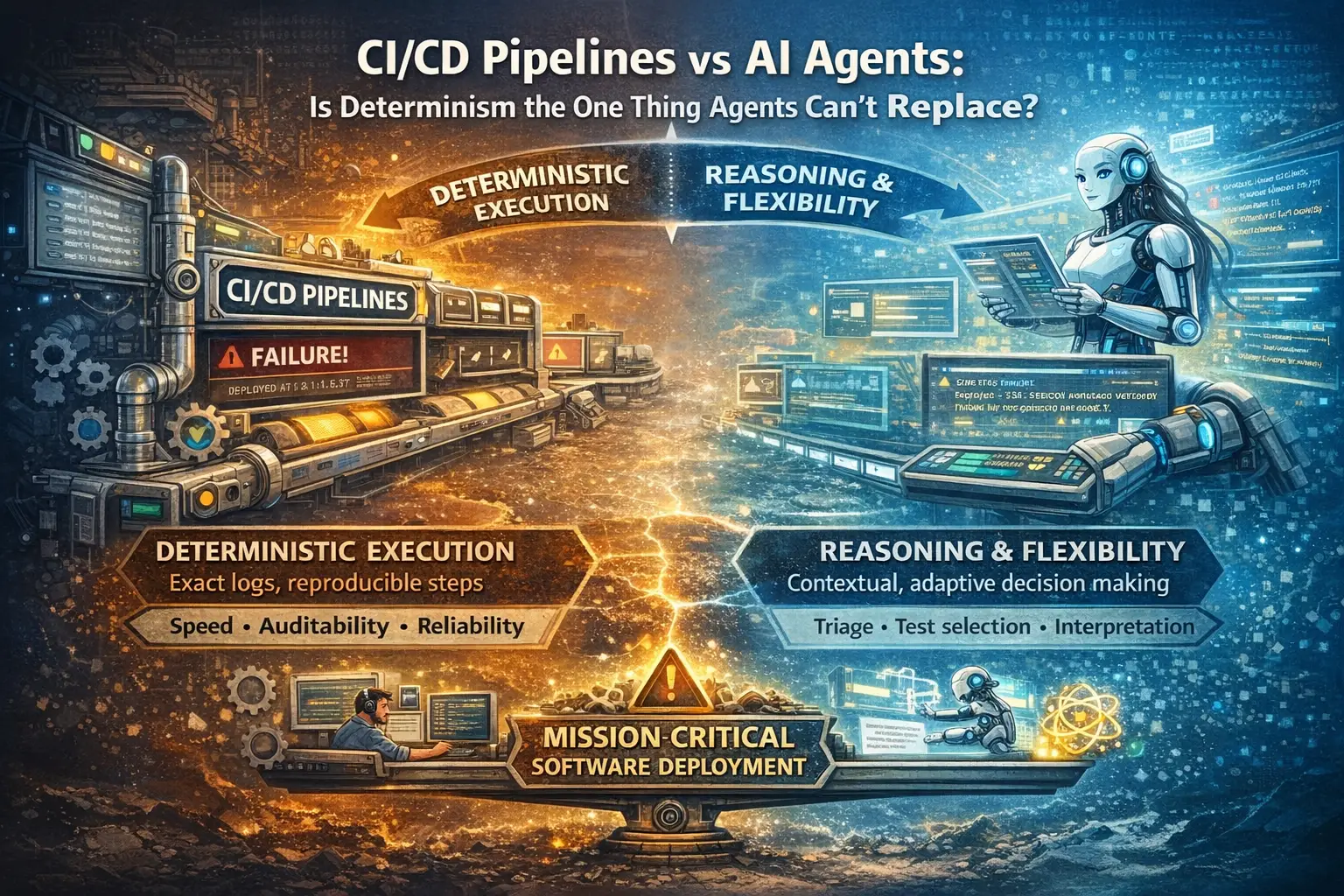

CI/CD pipelines are deterministic. AI agents can reason. Do we still need pipelines when agents can choose what to run and triage failures — or is determinism the one thing agents can't replace?

A question is quietly gaining momentum in software engineering circles. As AI agents become more capable (writing code, reviewing PRs, interpreting test results), it is natural to ask: do we still need pipelines?

The answer is more nuanced than most people expect. And getting it wrong will cost you.

What Pipelines Were Built To Do

CI/CD pipelines are one of the great engineering achievements of the last two decades. They took something chaotic — shipping software — and made it deterministic. You push code. The pipeline runs. It tells you exactly what passed, what failed, and at what step. Every time. Without ambiguity.

That reliability is not accidental. Pipelines are essentially sequential scripts triggered by events. They do not think. They do not interpret. They execute. And that rigidity — which feels like a limitation — is actually their greatest strength.

When a pipeline tells you step 4 failed at 14:32 on Tuesday, you can trust that. You can audit it. You can reproduce it. In regulated industries, that audit trail is not a nice-to-have — it is a legal requirement.

What AI Agents Do Differently

AI agents operate on a completely different paradigm. Where pipelines execute, agents reason. Where pipelines follow rules, agents apply context. Where pipelines report results, agents interpret meaning.

Consider a test failure. A pipeline tells you: "Test user_login_spec failed. Expected 200, received 401." Useful, but limited — you still have to figure out why.

An agent can go further. It reads the stack trace. It checks what changed in the last deployment. It scans the error logs. It cross-references historic incidents. It tells you: "This failure pattern matches an incident from three months ago. The root cause was an expired JWT secret. The same secret was rotated in yesterday's deployment. This is almost certainly the same issue."

That is a fundamentally different kind of output. Not just what happened — but why, and what to do about it.

The Case For Replacement

The argument for agents replacing pipelines goes like this: pipelines are rigid because they have to be. They cannot adapt because they have no intelligence. Every edge case requires a human to update the pipeline configuration. Every new scenario requires new rules. At scale, pipeline maintenance becomes a full-time job.

Agents, by contrast, adapt. They understand context. They can decide which tests to run based on what actually changed in a PR rather than running the entire suite every time. They can spot flaky tests and quarantine them automatically. They can make deployment decisions based on a holistic view of system health rather than a binary pass/fail gate.

Proponents argue that as agents become more reliable and faster, the case for keeping pipelines — with their maintenance burden and rigid configurations — weakens significantly.

The Case Against Replacement

The counter-argument is rooted in something pipelines have that agents fundamentally lack: determinism.

Non-determinism is manageable in many contexts. It is dangerous in deployment pipelines. When you are deciding whether to ship code to production, you need certainty. "The agent decided it was probably fine to deploy" is not an acceptable audit trail. "All 847 tests passed. Pipeline completed in 4m 32s. Deployed at 14:47." is.

There are three other problems with full replacement:

Speed. AI inference adds latency. A decision that a pipeline evaluates in milliseconds, such as a conditional or a path filter, becomes a multi-second operation when routed through a language model. Across thousands of daily deployments, those seconds add up to significant delays.
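Whether that latency matters depends on what the agent saves downstream. The sketch below is a back-of-the-envelope break-even calculation, not a benchmark: the function name, the 5-second agent latency, and the 10% selection ratio are all assumptions chosen for illustration, and it assumes test time scales linearly with the number of tests run.

```python
def net_seconds_saved(full_suite_s: float, selected_fraction: float,
                      agent_latency_s: float) -> float:
    """Seconds saved per run when an agent picks a subset of the tests.

    Assumes run time is proportional to the fraction of tests executed,
    which real suites only approximate.
    """
    selected_suite_s = full_suite_s * selected_fraction
    return full_suite_s - (selected_suite_s + agent_latency_s)


# Hypothetical inputs: a 272 s suite (4m 32s), 10% of tests selected,
# and 5 s of agent latency per run.
large = net_seconds_saved(full_suite_s=272, selected_fraction=0.1, agent_latency_s=5)
print(f"large suite: {large:.1f} s")  # positive: selection pays for the latency

# The same agent in front of a 3 s suite costs more than it saves.
small = net_seconds_saved(full_suite_s=3, selected_fraction=0.1, agent_latency_s=5)
print(f"small suite: {small:.1f} s")  # negative: the agent is pure overhead
```

Under these assumptions the latency only hurts when the work it gates is already fast, which is an argument for putting agents in front of slow suites first.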

Trust. Pipelines, whether GitHub Actions, Jenkins, CircleCI, or Azure Pipelines, have been battle-tested through years of production use. The tooling, the debugging approaches, and the failure modes are all well understood. Agent failures are harder to reason about, and when an agent makes a wrong decision, the reason is often opaque.

Debuggability. When a pipeline fails, you get a log. Line by line. Step by step. When an agent makes a wrong call, you are dealing with probabilistic reasoning that is inherently harder to trace. "Why did the agent decide not to deploy?" is a significantly harder question than "Which step failed?"

Pipelines vs Agents at a Glance

| | CI/CD Pipelines | AI Agents |
|---|---|---|
| Execution model | Deterministic scripts | Probabilistic reasoning |
| Speed | Milliseconds per step | Seconds per reasoning call |
| Auditability | Complete line-by-line logs | Opaque decision trace |
| Adaptability | Low: every edge case needs config | High: reads context, adapts |
| Maintenance | Human-heavy | Self-updating with context |
| Best for | Deployment gates, compliance | Triage, test selection, interpretation |

The Real Future — Agents Wrapping Pipelines

The most honest answer is that this is not an either/or question. The future is agents and pipelines working in a layered architecture — each doing what they do best.

Agent Orchestration Layer

Decides what to run, when, and why

↓

Pipeline Execution Layer

Runs deterministically, produces reliable results

↓

Agent Interpretation Layer

Reads results, triages failures, routes to the right place

In this model, the pipeline becomes the muscle. The agent becomes the brain.

Agents wrap the pipeline at both ends. Before execution, an agent decides intelligently which tests to run — not blindly running the full suite, but selecting tests based on what changed. After execution, an agent interprets the results — not just reporting pass/fail, but understanding what a failure means and what should happen next.

The pipeline itself remains untouched. Deterministic. Fast. Auditable. But it is no longer operating in isolation — it is now one component in a larger intelligent system.
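The three layers can be sketched in a few lines. This is a minimal illustration rather than a reference design: `StepResult`, `run_pipeline`, and `run_layered` are hypothetical names, and the two agent layers are plain callables standing in for whatever model call a real system would make.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class StepResult:
    name: str
    passed: bool


def run_pipeline(steps: dict[str, Callable[[], bool]],
                 selected: list[str]) -> list[StepResult]:
    """Execution layer: run the selected steps in order, stop at the first failure."""
    results = []
    for name in selected:
        passed = steps[name]()
        results.append(StepResult(name, passed))
        if not passed:
            break
    return results


def run_layered(steps, choose_steps, interpret):
    """Wrap the deterministic pipeline with an agent at both ends."""
    selected = choose_steps(list(steps))      # agent orchestration layer
    results = run_pipeline(steps, selected)   # pipeline execution layer
    return results, interpret(results)        # agent interpretation layer


# Stub steps and stub "agents" (assumptions, no model is called here).
steps = {"lint": lambda: True, "unit": lambda: False, "e2e": lambda: True}
results, note = run_layered(
    steps,
    choose_steps=lambda names: [n for n in names if n != "e2e"],
    interpret=lambda rs: f"{sum(not r.passed for r in rs)} step(s) failed",
)
print(results)
print(note)
```

The agent layers never touch `run_pipeline` itself: given the same selection, the execution layer produces the same ordered record of what ran and what passed.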

Where Agents Are Already Winning

Even today, there are specific pipeline responsibilities that agents handle better:

Flaky test detection. A pipeline marks a test as failed. An agent recognises it as a test that has failed intermittently seventeen times in the last month and quarantines it automatically rather than blocking the build.
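One deterministic signal such an agent could start from is a test that both passed and failed on the same commit. The sketch below assumes that heuristic and a hypothetical `RunRecord` shape for the run history; a real agent would likely weigh more evidence before quarantining anything.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class RunRecord(NamedTuple):
    test_name: str
    commit_sha: str
    passed: bool


def find_flaky_tests(runs: Iterable[RunRecord]) -> set[str]:
    """Return tests that have both passed and failed on the same commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for run in runs:
        outcomes[(run.test_name, run.commit_sha)].add(run.passed)
    return {name for (name, _sha), seen in outcomes.items() if len(seen) == 2}


history = [
    RunRecord("user_login_spec", "abc123", False),
    RunRecord("user_login_spec", "abc123", True),   # same commit, different result
    RunRecord("checkout_spec", "abc123", False),
    RunRecord("checkout_spec", "def456", True),     # fixed by a later commit
]
print(find_flaky_tests(history))
```

Comparing outcomes on the same commit keeps a test that failed and was then fixed from being treated as flaky, which matters because a wrongly quarantined test can hide a real regression.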

Intelligent test selection. Rather than running 2,000 tests on every PR, an agent reads the diff and selects the 200 tests actually relevant to what changed. If the selection is accurate, the changed code gets the same coverage in a fraction of the time.
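The simplest form of diff-based selection is a lookup from changed files to the tests that exercise them. The sketch below assumes a hypothetical `test_map` (for example, built from coverage data) and falls back to the full suite whenever a changed file is unknown, so a gap in the map cannot silently skip tests.

```python
def select_tests(changed_files: list[str],
                 test_map: dict[str, set[str]],
                 full_suite: set[str]) -> list[str]:
    """Pick the tests mapped to the changed files, or everything if unsure."""
    selected: set[str] = set()
    for path in changed_files:
        if path not in test_map:
            # Unknown file: we cannot prove which tests are safe to skip.
            return sorted(full_suite)
        selected.update(test_map[path])
    return sorted(selected)


# Hypothetical mapping and suite, for illustration only.
test_map = {
    "auth/jwt.py": {"test_login", "test_token_refresh"},
    "cart/pricing.py": {"test_checkout"},
}
full_suite = {"test_login", "test_token_refresh", "test_checkout", "test_search"}

print(select_tests(["auth/jwt.py"], test_map, full_suite))
print(select_tests(["auth/jwt.py", "infra/deploy.yaml"], test_map, full_suite))
```

An agent adds value on top of a lookup like this by reasoning about changes the map cannot see, but the conservative fallback is what keeps the selection safe to trust.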

Failure triage. An agent that understands the codebase, the deployment history, and the incident history can route a failure to the right team with the right context in seconds — something that previously required human intervention.

Deployment readiness. Rather than a binary gate, an agent can assess overall system health, recent incident patterns, and business context before recommending a deployment.
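One way to combine a recommendation with a hard gate is to let the agent hold a deployment back but never override a failed test run. The sketch below assumes that policy; `Recommendation` and `decide_deploy` are hypothetical names, and the recommendation itself would come from an agent that is not shown here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    deploy: bool
    reason: str


def decide_deploy(all_tests_passed: bool,
                  recommendation: Recommendation) -> tuple[bool, str]:
    """The deterministic gate always wins; the agent can only add caution."""
    if not all_tests_passed:
        return False, "blocked: test gate failed"
    if not recommendation.deploy:
        return False, f"held: {recommendation.reason}"
    return True, f"deployed: {recommendation.reason}"


# An agent that wants to ship cannot get past a red pipeline.
print(decide_deploy(False, Recommendation(True, "system health looks normal")))
# A green pipeline can still be held for context the gate does not see.
print(decide_deploy(True, Recommendation(False, "open incident on the auth service")))
```

Both outcomes carry a reason string, so the log records whether the gate or the agent stopped the release.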

What This Means For Engineering Teams

Teams that treat this as a replacement question will make the wrong bets. Teams that ask "where does reasoning add value that determinism cannot?" will build the right systems.

The practical path forward is incremental. Start by wrapping your existing pipeline with agent intelligence at the interpretation layer. Let the agent triage failures and route them intelligently. Measure the time saved. Then gradually move agent intelligence into the orchestration layer — smarter test selection, smarter deployment decisions.

The pipeline does not go away. It becomes more valuable because it is now directed by intelligence rather than operating blindly.

Frequently Asked Questions

Will AI agents replace CI/CD pipelines entirely?

No — not for deployment-critical workflows. Pipelines give you deterministic, auditable execution, which is non-negotiable in regulated or high-stakes environments. Agents elevate pipelines by adding reasoning around them, not by taking their place.

Can an agent decision satisfy a compliance audit?

Generally not on its own. Auditors ask "exactly what ran, when, and with what result?" — a pipeline log answers that deterministically. Agent reasoning, being probabilistic, leaves a weaker trail. The pragmatic pattern is to let agents decide and route, but have pipelines execute and log the actual steps.

Where do agents actually win today?

Four places stand out today: flaky test detection, intelligent test selection based on diff context, failure triage with incident history, and deployment readiness that goes beyond a binary pass/fail.

What's the fastest way to introduce agents into an existing pipeline?

Start at the interpretation layer — point an agent at your pipeline's output and let it triage failures. No pipeline-config changes, no risk to deployment determinism, and measurable time savings. Once that is trusted, expand the agent upstream into test selection and orchestration.

How do we debug an agent's wrong decision?

This is still an open problem. Best current practice: log the agent's inputs (diff, test history, recent incidents) and its reasoning trace, then treat agent calls like any other pipeline step: versioned, replayable, and reviewable. Without that discipline, agent failures become impossible to audit.
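A decision record along these lines might look like the sketch below. The field names and the `decision_record` helper are assumptions for illustration; the one deliberate design choice is hashing a canonical form of the inputs, so that two decisions made from identical inputs can be matched up when replaying.

```python
import hashlib
import json
from datetime import datetime, timezone


def decision_record(inputs: dict, reasoning: str, decision: str,
                    agent_version: str) -> dict:
    """Build a JSON-serializable record of one agent decision."""
    # Sorted keys and fixed separators make the hash independent of key order.
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_version": agent_version,
        "inputs": inputs,
        "inputs_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "reasoning": reasoning,
        "decision": decision,
    }


# Hypothetical values throughout.
record = decision_record(
    inputs={"diff": "auth/jwt.py", "recent_incidents": ["expired JWT secret"]},
    reasoning="Failure pattern matches a previous incident.",
    decision="route to auth team",
    agent_version="triage-agent-0.1",
)
print(json.dumps(record, indent=2))
```

Appending each record as one line to a log file would give reviewers something to diff when the same inputs produce a different decision.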

The Bottom Line

Pipelines will not be replaced by agents. They will be elevated by them.

The determinism, speed, and auditability of pipelines are not weaknesses to be overcome — they are properties to be preserved and built upon. Agents bring the reasoning and contextual intelligence that pipelines have always lacked.

The teams that will win are not those who abandon their pipelines for agent-driven workflows, nor those who ignore agents and keep running brittle, high-maintenance pipeline configurations. The winners will be those who understand that pipelines and agents are complementary — that the pipeline is the execution layer, the agent is the intelligence layer, and that together they are dramatically more powerful than either alone.

The question was never agents or pipelines. It was always agents and pipelines — and how to make them work together.

Do You Agree?

This is where reasonable engineers disagree, and where the next two years of DevOps will be decided. A few questions we keep returning to:

- Is "the agent decided to deploy" ever an acceptable audit trail?

- At what point does agent latency in an orchestration layer offset the speed savings from smarter test selection?

- Do flaky-test quarantine agents hide real regressions?

- Should agent decisions in deployment paths require human approval, or is logging and replay enough?

We're building in this space. If you have a counter-argument — especially one rooted in production pain — we'd like to hear it. Come build with us.
